LREC 2022 (Oral)

Jun 2022

Modality Alignment between Deep Representations for Effective Video-and-Language Learning

Hyeongu Yun*, Yongil Kim*, Kyomin Jung

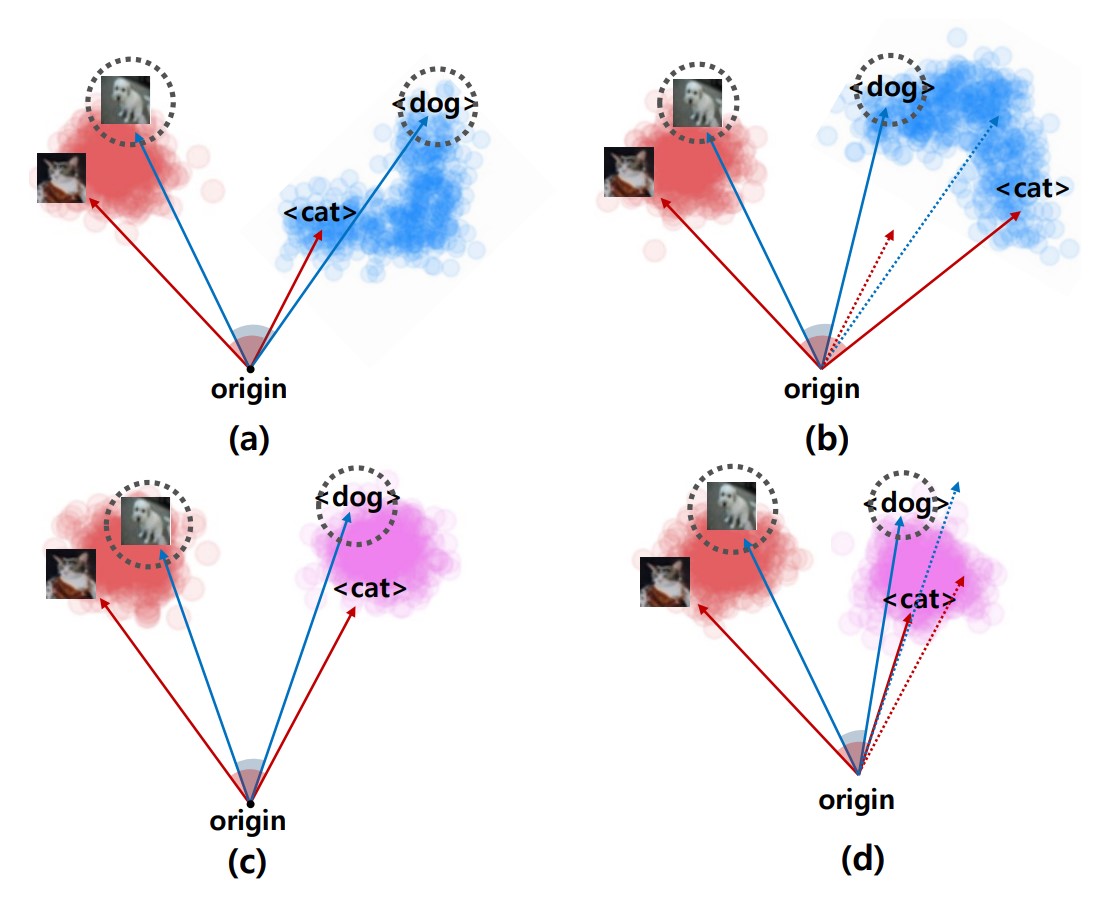

Abstract: Video-and-Language learning, which includes tasks such as video question answering and video captioning, is the next challenge for the deep learning community, as it aims to model how human intelligence perceives everyday life. These tasks require multi-modal reasoning, that is, the ability to handle visual and textual information simultaneously across time. A cross-modality attention module that fuses video and text representations plays a critical role in most recent approaches. However, existing Video-and-Language models merely compute attention weights without considering the differing characteristics of the video and text modalities. In this paper, we propose a novel Modality Alignment method that benefits the cross-modality attention module by guiding it to amalgamate multiple modalities more easily. Specifically, we exploit Centered Kernel Alignment (CKA), which was originally proposed to measure the similarity between two deep representations. Experiments on real-world Video QA tasks demonstrate that our method significantly outperforms conventional multi-modal methods.
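
For readers unfamiliar with CKA, the following is a minimal sketch of the commonly used linear variant, which scores the similarity of two representation matrices computed over the same set of examples. It is illustrative only: the abstract does not state which CKA variant or kernel the paper uses, nor how the score enters training, so the function name `linear_cka`, the feature shapes, and the random stand-in features are all assumptions.

```python
import numpy as np


def linear_cka(x: np.ndarray, y: np.ndarray) -> float:
    """Linear CKA between two representations of the same n examples.

    x has shape (n, d1) and y has shape (n, d2); the feature
    dimensions d1 and d2 may differ. Hypothetical helper, not the
    paper's implementation.
    """
    # Center each feature column so the score ignores mean offsets.
    x = x - x.mean(axis=0, keepdims=True)
    y = y - y.mean(axis=0, keepdims=True)

    # Squared Frobenius norm of the cross-covariance (unnormalized).
    cross = np.linalg.norm(y.T @ x, ord="fro") ** 2
    # Normalizers built from each representation's own covariance.
    norm_x = np.linalg.norm(x.T @ x, ord="fro")
    norm_y = np.linalg.norm(y.T @ y, ord="fro")
    return float(cross / (norm_x * norm_y))


# Random stand-ins for video and text features (assumed shapes).
rng = np.random.default_rng(0)
video_feats = rng.normal(size=(32, 16))
text_feats = rng.normal(size=(32, 8))

self_sim = linear_cka(video_feats, video_feats)
cross_sim = linear_cka(video_feats, text_feats)
```

The score lies in [0, 1], equals 1 when a representation is compared with itself, and is unchanged by orthogonal transformations and isotropic scaling of either input. These properties are what make it usable across modalities with different feature dimensions.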